Different Channel, Different Video, Similar Patterns

What is this blogpost about?

This is another addition to the blogpost series “Can This Go Live”. The first blogpost outlining the series’ focus and the intended audience is here.

What did I stumble upon?

About the video

- Platform: YouTube

- Title: Conan & Matt Gourley Have Beef With Beets | Conan O'Brien Needs A Fan

- Creator: Team Coco

About my interest in the video: One of my friends is incredibly funny. In 2010, in the midst of his contrarian takes like “Modern Family is a funnier show than Friends”, he began posting frequently about Conan O’Brien. Because I found my friend incredibly funny, I started consuming Conan’s bits. Since then, I’ve been a big fan and regularly listen to his bits on YouTube.

About how I stumbled upon the examples

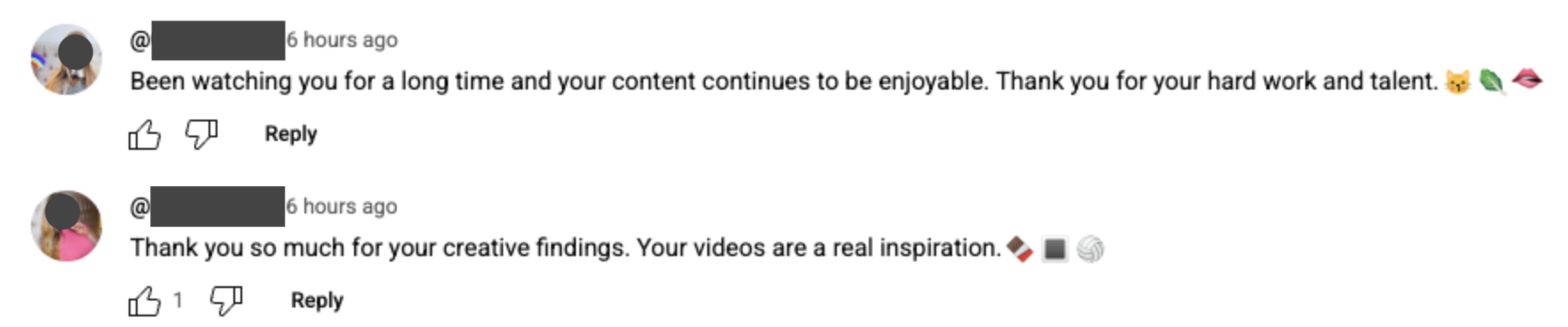

After publishing the first two blogposts, I continued my routine scrolling of comments. While watching the latest video by Team Coco, I noticed patterns similar to those previously described. Sorting by “Newest first”, I came across 2 accounts (G1).

Near-Duplicate Account Analysis

The G1 accounts posted comments that share (i) a grateful sentiment, (ii) a similar structure of 2 sentences plus 3 emojis, and (iii) gibberish emoji sequences. Furthermore, one account on this video and another on the previous Emergency Awesome video have the same profile picture.

| Attribute | Team Coco video | Emergency Awesome video |
|---|---|---|
| Account Figure | Figure 2 | Figure 3 |
| Profile Picture | Identical (P) | Identical (P) |
| Account Name | Obfuscated A | Obfuscated B |
| Description | Duplicate X1, X2 links | Duplicate X1, X2 links |
| Creation Date | 2025 July 3 | 2025 July 3 |

Conclusion: I consider these "near-duplicate" accounts because they share the same profile picture, similar usernames, identical description links, and an identical creation date.
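The attribute comparison in the table can be sketched in code. This is only a minimal illustration, not how YouTube or any real tool does it: the `Account` class, its field names, the `min_signals` threshold, and the sample values (including the unrelated third account) are all hypothetical. Username similarity is left out because the names here are obfuscated.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Account:
    # All fields are hypothetical stand-ins for what a reviewer can see.
    name: str
    picture_id: str      # identifier for the profile picture, e.g. "P"
    links: frozenset     # links found in the channel description
    created: str         # creation date as YYYY-MM-DD


def shared_signals(a, b):
    """Return the names of the attributes that two accounts have in common."""
    signals = []
    if a.picture_id == b.picture_id:
        signals.append("profile_picture")
    if a.links and a.links == b.links:
        signals.append("description_links")
    if a.created == b.created:
        signals.append("creation_date")
    return signals


def near_duplicate_pairs(accounts, min_signals=3):
    """List account pairs that share at least `min_signals` attributes."""
    pairs = []
    for a, b in combinations(accounts, 2):
        signals = shared_signals(a, b)
        if len(signals) >= min_signals:
            pairs.append((a.name, b.name, signals))
    return pairs


# Sample data mirroring the table, plus one made-up unrelated account.
accounts = [
    Account("Obfuscated A", "P", frozenset({"X1", "X2"}), "2025-07-03"),
    Account("Obfuscated B", "P", frozenset({"X1", "X2"}), "2025-07-03"),
    Account("Unrelated C", "Q", frozenset(), "2019-01-15"),
]

for first, second, signals in near_duplicate_pairs(accounts):
    print(first, second, signals)
```

With this sample data, only the pair "Obfuscated A" and "Obfuscated B" is reported, because it is the only pair that matches on all three attributes.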

Summary of Problematic Activity

Based on this exploration, there appears to be a group of coordinated, inauthentic, near-duplicate accounts that (1) direct users to on-platform accounts, which (2) then direct users to off-platform products (e.g., beacons.ai). This multi-layered redirection likely reduces the chance of detection while using positive-sentiment comments to drive users toward malicious services.

Thoughts & Next Steps

I looked through YouTube’s Creator Insider channel for videos about spam mitigation. Based on my understanding, mitigation efforts bring spam asymptotically closer to zero, but the problem keeps evolving.

Key Questions for Mitigation

- Scale: How many coordinated networks are being operated? How many accounts?

- Impact: What is the network's targeting approach (channel-level or video-level)?

- Mitigation: What channel-level signals are used? How effective is using profile pictures as a signal?

- Systems: Can workflows be run more frequently? Can tools handle nuanced edge cases better?

- Tools: Are new tools needed (e.g., alerts for high profile picture reuse)?
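To make the profile picture reuse question more concrete, here is a rough sketch of what such an alert could look like. It assumes the raw image bytes of each account's profile picture are available, and it uses an exact SHA-256 match, which would miss pictures that were resized or re-encoded. The function names, the `threshold` parameter, and the account IDs are hypothetical.

```python
import hashlib
from collections import defaultdict


def picture_fingerprint(image_bytes):
    """Exact-match fingerprint; a resized or re-encoded copy would not match."""
    return hashlib.sha256(image_bytes).hexdigest()


def reuse_alerts(account_pictures, threshold=2):
    """Group account IDs by picture fingerprint and return the groups
    that contain at least `threshold` accounts."""
    by_fingerprint = defaultdict(list)
    for account_id, image_bytes in account_pictures.items():
        by_fingerprint[picture_fingerprint(image_bytes)].append(account_id)
    return [sorted(ids) for ids in by_fingerprint.values() if len(ids) >= threshold]


# Made-up example: two accounts share a picture, one does not.
pictures = {
    "acct_a": b"same-picture-bytes",
    "acct_b": b"same-picture-bytes",
    "acct_c": b"different-picture-bytes",
}

print(reuse_alerts(pictures))
```

A real system would probably need a perceptual comparison rather than an exact hash, and a threshold tuned so that common default avatars do not trigger alerts.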